JasperReports Server uses UTF-8 (8-bit Unicode Transformation Format) character encoding. If your database server or application server uses a different character encoding, you may have to configure it to support UTF-8. This section explains how to configure the character encoding for several application servers and database servers. If you use a different application server or database, and its default character encoding isn’t UTF-8, you may need to make similar updates to support certain locales. For more information, refer to the documentation for your application server or database.

Tomcat

By default, Tomcat uses ISO-8859-1 (ISO Latin 1) character encoding for URIs. This is sufficient for Western European locales but does not support many locales in other parts of the world.
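The effect of decoding a URI with the wrong charset can be illustrated in Java. The sketch below is an analogy, not Tomcat's own code. It uses java.net.URLDecoder to decode one percent-encoded query value, a sample Polish word, twice: once as UTF-8 and once as ISO-8859-1. The class name and sample value are hypothetical, and URLDecoder.decode(String, Charset) requires Java 10 or later.

```java
import java.net.URLDecoder;
import java.nio.charset.Charset;
import java.nio.charset.StandardCharsets;

// Hypothetical demo class: shows how the charset chosen for URI decoding
// changes the characters a server sees for the same request bytes.
public class UriDecodingDemo {

    // Decodes a percent-encoded value using the given charset.
    static String decode(String encoded, Charset charset) {
        return URLDecoder.decode(encoded, charset);
    }

    public static void main(String[] args) {
        // A Polish word as a browser sends it: its UTF-8 bytes, percent-encoded.
        String fromBrowser = "za%C5%BC%C3%B3%C5%82%C4%87";

        String utf8 = decode(fromBrowser, StandardCharsets.UTF_8);
        String latin1 = decode(fromBrowser, StandardCharsets.ISO_8859_1);

        // Decoded as UTF-8, the 10 bytes become the original 6 characters.
        System.out.println(utf8.equals("za\u017c\u00f3\u0142\u0107"));
        // Decoded as ISO-8859-1, every byte becomes its own character.
        System.out.println(latin1.length());
    }
}
```

Decoding as UTF-8 returns the original six characters. Decoding as ISO-8859-1 turns each of the ten bytes into a separate character, which is the kind of garbled text a Latin-1 URI setting produces for non-Latin-1 input.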

If you plan to support locales that Latin 1 does not support, you must change Tomcat's URI encoding. If you chose the instance of Tomcat that is bundled with the installer, you do not need to make this change, because the bundled Tomcat is preconfigured to support UTF-8. If you installed the WAR file distribution with your own instance of Tomcat and want to support UTF-8, perform the following procedure.

To configure Tomcat to support UTF-8:

1. Open the conf/server.xml file and locate the Connector element for the HTTP port.
2. Add the attribute URIEncoding="UTF-8" to that Connector element.
3. Save the file.
4. Restart Tomcat.

JBoss

Since JBoss uses Tomcat as its web connector, the configuration changes described above for Tomcat also have to be made for JBoss. The only difference is that the server.xml file is located in the Tomcat deployment directory, typically server/default/deploy/jbossweb-tomcat55.sar. Make the same configuration changes, then restart JBoss.

PostgreSQL

JasperReports Server requires PostgreSQL to use UTF-8 character encoding for the database that stores its repository as well as for data sources.

A simple way to meet the requirement is to create the database with a UTF-8 character set. For example, enter the following command:

create database jasperserver encoding = 'utf8';

MySQL

By default, MySQL uses ISO-8859-1 (ISO Latin 1) character encoding. However, JasperReports Server requires MySQL to use UTF-8 character encoding for the database that stores its repository as well as for data sources. The simplest way to meet the requirement is to create the database with a UTF-8 character set. For example, enter the following command:

create database jasperserver character set utf8;

To support UTF-8, the MySQL JDBC driver also requires that the useUnicode and characterEncoding parameters be set, as in this startup URL:

url='jdbc:mysql://localhost:3306/jasperserver?useUnicode=true&characterEncoding=UTF-8'

If the MySQL database is a JNDI data source managed by Tomcat, such as the JasperReports Server repository database, you can add the parameters to the JDBC URL in the resource definition in WEB-INF/context.xml.
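The MySQL URL requirements above can be sketched in Java. This is a minimal illustration, not part of JasperReports Server. The class and method names (MySqlUnicodeUrl, jdbcUrl, forXml) are hypothetical, and the host, port, and database name come from the startup URL shown above. The sketch builds the URL with the two driver parameters and also shows the form the URL takes inside an XML file such as context.xml, where a literal & has to be written as &amp;.

```java
/**
 * Hypothetical helper: builds a MySQL JDBC URL that carries the two
 * driver parameters needed for UTF-8 (useUnicode, characterEncoding).
 */
public class MySqlUnicodeUrl {

    // Parameters the MySQL JDBC driver needs in order to exchange UTF-8 text.
    static final String UNICODE_PARAMS = "useUnicode=true&characterEncoding=UTF-8";

    // Plain URL, as entered for a data source in the repository (ampersand not escaped).
    static String jdbcUrl(String hostAndPort, String database) {
        return "jdbc:mysql://" + hostAndPort + "/" + database + "?" + UNICODE_PARAMS;
    }

    // The same URL as it must appear inside an XML file such as context.xml.
    // XML reserves '&', so it is written as the entity &amp;.
    // Assumes the input has not already been escaped.
    static String forXml(String url) {
        return url.replace("&", "&amp;");
    }

    public static void main(String[] args) {
        String url = jdbcUrl("localhost:3306", "jasperserver");
        System.out.println("Repository data source:  " + url);
        System.out.println("XML resource definition: " + forXml(url));
    }
}
```

Running main prints the plain URL first and the escaped variant second. Only the escaped variant is well-formed inside an XML resource definition.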

JBoss ignores the context.xml file. Instead, it requires an XML file in the deployment directory, typically server/default/deploy, to define JNDI data sources. The following is an example of the JDBC URL in a resource definition in one of those XML files:

jdbc:mysql://localhost/jasperserver?useUnicode=true&characterEncoding=UTF-8

If the database is a JDBC data source configured in the repository, change the JDBC URL by editing the data source in the JasperReports Server repository. The following is an example of the JDBC URL (note that the ampersand isn't escaped):

jdbc:mysql://localhost:3306/foodmartja?useUnicode=true&characterEncoding=UTF-8

Oracle

Oracle databases have both a default character set and a national character set that supports Unicode characters. Text types beginning with N (NCHAR, NVARCHAR2, and NCLOB) use the national character set. As of JasperServer 1.2, all the text data used by the JasperReports Server repository (when stored in Oracle) is stored in NVARCHAR2 columns, so that JasperReports Server metadata can use the full Unicode character set. For more information about Unicode text support, refer to the.

To work properly with Unicode data, the Oracle JDBC driver requires you to set a Java system property by passing the following argument to the JVM:

-Doracle.jdbc.defaultNChar=true

In Tomcat, add the variable to JAVA_OPTS in bin/setclasspath.sh (Linux) or bin/setclasspath.bat (Windows):

1. Locate the following line in the script:
   Linux: # Set the default -Djava.endorsed.dirs argument
   Windows: rem Set the default -Djava.endorsed.dirs argument
2. Add the following line before it:
   Linux: JAVA_OPTS="$JAVA_OPTS -Doracle.jdbc.defaultNChar=true"
   Windows: set JAVA_OPTS=%JAVA_OPTS% -Doracle.jdbc.defaultNChar=true

Since JBoss also uses JAVA_OPTS to pass options to the JVM, you can add the same JAVA_OPTS line to bin/run.sh (Linux) and bin/run.bat (Windows). Add it before this line:

Linux: # Setup the java endorsed dirs
Windows: rem Setup the java endorsed dirs
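After editing the startup script, it may help to confirm that the JVM actually received the property. An edit to the wrong script, or a mistyped property name (system property names are case-sensitive), leaves the property unset without any error. The sketch below is a hypothetical diagnostic, not part of JasperReports Server or the Oracle driver. It only reads the system property and does not show how the driver then uses it.

```java
/**
 * Hypothetical diagnostic: reports whether the JVM was started with
 * -Doracle.jdbc.defaultNChar=true. It only inspects the system property;
 * it does not load or test the Oracle JDBC driver.
 */
public class NCharCheck {

    static final String PROPERTY = "oracle.jdbc.defaultNChar";

    // Boolean.getBoolean returns true only when the system property exists
    // and equals "true" (ignoring case).
    static boolean defaultNCharEnabled() {
        return Boolean.getBoolean(PROPERTY);
    }

    public static void main(String[] args) {
        if (defaultNCharEnabled()) {
            System.out.println(PROPERTY + " is true");
        } else {
            System.out.println(PROPERTY + " is not set to true; check the startup script");
        }
    }
}
```

Started with -Doracle.jdbc.defaultNChar=true on the java command line, the class prints the first message. Without the flag, it prints the second.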

Those characters (well, the Polish ones; things will be very different for Vietnamese) are in the U+01xx range (Latin Extended-A), which means they should be available in all major fonts. My Helvetica sure has them. You could check (in a hex editor) that the character codes in the XSL-FO file are the same as in the original XML file, and that the encoding is still 'UTF-8'.

What happens if Acrobat doesn't support that font? It's not so much a question of Acrobat not supporting the font; it's a question of whether the machine displaying the PDF has that font. Apparently that's not a problem for Polish, but it may well be for Vietnamese.

The solution would be to embed the font in the PDF document (making it substantially larger, especially for something like Vietnamese). I'm not sure how to specify that with FOP, but as I recall, it can be done.

I looked at the hex values for the characters in the resulting XSL-FO and in the XML, and they are the same.

I did notice something once the document opens in Acrobat. If I right-click the document, choose Properties, and select the Fonts tab, Acrobat lists the fonts used in the document and shows them all as encoded in Ansi. I am using Acrobat 8. I don't understand why the encoding is listed as Ansi. Did I miss a step or a tag or something? I also get an error during the transformation of the XML:

ERROR unknown font Times New Roman,normal,normal so defaulted font to any

I get the same error when I try to use Arial, but not with Helvetica. It's as if Acrobat doesn't know to use UTF-8 encoding. I put the encoding declaration at the beginning of both the XML file and the XSL file.

I also added the xsl:output tag. Also, when I transform the XML with the XSL, I do this:

transformer.setOutputProperty(OutputKeys.ENCODING, "UTF-8");

Is there anything else I have to do? Did I miss something?
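The JAXP call in the question compiles once 'UTF-8' is written as a double-quoted string, because Java has no single-quoted string literals. As far as I know, one detail worth checking is where the transformer writes its output. When the StreamResult wraps an OutputStream, the transformer itself produces the UTF-8 bytes. When it wraps a Writer (for example a FileWriter), the encoding property mainly affects the XML declaration, and the Writer's own charset, often the JVM default, decides the bytes. The minimal sketch below uses an identity transform in place of the real stylesheet. The class and method names are made up for illustration.

```java
import java.io.ByteArrayOutputStream;
import java.io.StringReader;
import java.nio.charset.StandardCharsets;
import javax.xml.transform.OutputKeys;
import javax.xml.transform.Transformer;
import javax.xml.transform.TransformerException;
import javax.xml.transform.TransformerFactory;
import javax.xml.transform.stream.StreamResult;
import javax.xml.transform.stream.StreamSource;

public class Utf8Transform {

    // Runs an identity transform (a stand-in for the real XSL stylesheet)
    // and serializes the result as UTF-8 bytes.
    static byte[] transformToUtf8(String xml) {
        try {
            Transformer transformer = TransformerFactory.newInstance().newTransformer();
            // Double quotes: 'UTF-8' in single quotes is not a valid Java literal.
            transformer.setOutputProperty(OutputKeys.ENCODING, "UTF-8");

            // Writing to an OutputStream lets the transformer do the encoding itself,
            // instead of a Writer whose charset might be the platform default.
            ByteArrayOutputStream out = new ByteArrayOutputStream();
            transformer.transform(new StreamSource(new StringReader(xml)), new StreamResult(out));
            return out.toByteArray();
        } catch (TransformerException e) {
            throw new IllegalStateException("Transformation failed", e);
        }
    }

    public static void main(String[] args) {
        // Polish sample written with Unicode escapes so the source file's own
        // encoding does not matter: z, a, z-dot, o-acute, l-stroke, c-acute.
        String word = "za\u017c\u00f3\u0142\u0107";
        byte[] bytes = transformToUtf8("<name>" + word + "</name>");

        String decoded = new String(bytes, StandardCharsets.UTF_8);
        System.out.println("Decodes back as UTF-8: " + decoded.contains(word));
    }
}
```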

It's looking more and more like this is a question about Acrobat. It's still possible that whatever you're using to convert XSL-FO to PDF is failing to set some PDF parameter. However, if you're uniformly seeing # for any non-Latin-1 character, that doesn't suggest a character encoding problem to me. It looks more like a deliberate choice by Acrobat, and the 'ANSI' thing you found implies that, too. We could move this to a forum about Acrobat rather than leaving it in the XML forum, if you'd like. Just let us know.

I think the XML aspect has pretty much been beaten to death.

I figured out the solution to this problem.

I was using an outdated version of Apache FOP, so I upgraded to FOP 0.95. I also had to set WebSphere's JVM encoding to UTF-8 through the admin console. The recommended setting is -Dclient.encoding.override=UTF-8, but that did not work; I had to use -Dfile.encoding=UTF-8. I also had to follow the instructions on Apache's website to embed a font through the XSL. Even though Adobe uses character sets that can handle UTF-8, I had to embed the font anyway to make the full character set available.

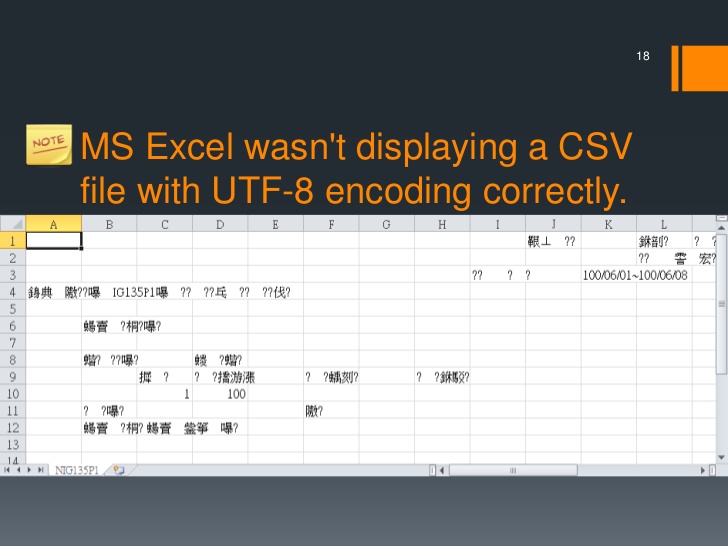

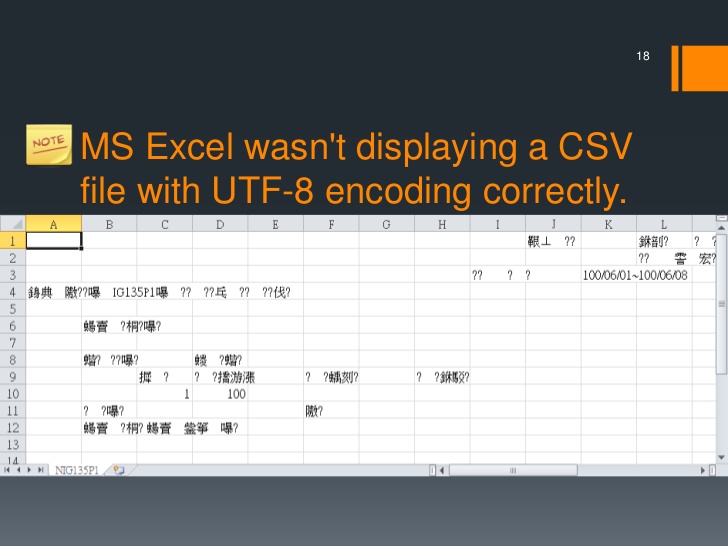

In my experience, if the characters are being replaced with ?s, there is a character-mapping issue on the server. If the characters are replaced with #s, there is a character-mapping issue in the PDF. I just want to say thanks to everyone who responded.

The replies helped point me in the right direction. I just wanted to provide an update to the issue in case someone else stumbles on this page and is trying to do the same thing. Thanks again, Chris.
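The rule of thumb above about question marks can be reproduced in plain Java. When text is converted to bytes in a charset that lacks some of its characters, String.getBytes(Charset) substitutes that charset's replacement byte, which is '?' for ISO-8859-1. The sketch below illustrates only that server-side symptom; it does not reproduce the '#' case, which the thread attributes to the PDF side. The class name is made up. Calls such as getBytes() or new String(byte[]) without an explicit charset use the JVM default charset. Before JDK 18 that default is largely what -Dfile.encoding sets; from JDK 18 on, the default is UTF-8.

```java
import java.nio.charset.Charset;
import java.nio.charset.StandardCharsets;

public class QuestionMarkSymptom {

    // Encodes text to bytes and decodes it again with the same charset,
    // mimicking a String -> bytes -> String hop on the server.
    // getBytes(Charset) replaces unmappable characters with the charset's
    // replacement byte ('?' for ISO-8859-1) instead of throwing.
    static String roundTrip(String text, Charset charset) {
        return new String(text.getBytes(charset), charset);
    }

    public static void main(String[] args) {
        String polish = "za\u017c\u00f3\u0142\u0107";

        // z-dot, l-stroke and c-acute are not in Latin-1 and become '?';
        // o-acute survives because Latin-1 contains it.
        System.out.println(roundTrip(polish, StandardCharsets.ISO_8859_1));

        // UTF-8 can represent every character, so the text survives intact.
        System.out.println(roundTrip(polish, StandardCharsets.UTF_8).equals(polish));
    }
}
```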

As I am new, first of all I would like to thank the creators of JasperReports for this wonderful and powerful reporting engine. You guys rock! Now, the problem. I have a report that uses some letters that are not in the basic Latin alphabet (I am using Polish and German letters in the tests). The output is OK in every export format except PDF. I set isPdfEmbedded to true (I'm not at all sure this is required), pdfFontName is the default (Helvetica for me), and pdfEncoding is UTF-8.

UTF-8 is not on the list of allowed encodings, so I put it there the hard way, by editing the list. When I export the report to PDF (using the iReport PDF preview), I only see standard Latin characters; the special ones simply do not appear in the PDF output. When I change the encoding to Identity-H (Unicode with horizontal writing), the internal preview looks fine, but the PDF prints nothing for this field. When I change it to Cp1250 (Central European), it works fine. However, that is not an ideal solution, because our system might be used in France or Spain, and their characters would not be displayed. What can I do to fix this? Please see the attached file.

I have the same problem (see my posting about FontSelector support in this forum).

I have no solution for this yet. Here is what I think our problem is: the built-in PDF fonts like Helvetica in your sample have all the characters we need (i.e. all Western and Central European characters), but they do not have a Unicode encoding. There is a CP1252 encoding for Western characters and a CP1250 encoding for Central European characters, so you have to specify the matching encoding to get your characters printed. The iText library, which JasperReports uses to provide PDF support, has a solution for this called FontSelector.
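The point about single-byte encodings can be checked with the JDK's own charset encoders: text prints correctly with a built-in font only if every character exists in the chosen code page. The sketch below is illustrative only. The class name and sample words are made up, and it tests Java's windows-1250 and windows-1252 charsets, not iText or the PDF fonts themselves. It shows that Polish fits CP1250 but not CP1252, that Spanish fits CP1252 but not CP1250, and that a field mixing Czech and Spanish fits neither. That last case is what FontSelector, or a Unicode font with Identity-H, is meant to handle.

```java
import java.nio.charset.Charset;

public class CodePageCoverage {

    // True if every character of text can be encoded in the named charset.
    // A fresh encoder is created per call because CharsetEncoder is stateful.
    static boolean fits(String text, String charsetName) {
        return Charset.forName(charsetName).newEncoder().canEncode(text);
    }

    public static void main(String[] args) {
        // Samples written with Unicode escapes:
        String german = "Gr\u00f6\u00dfe";             // o-umlaut, sharp s
        String polish = "za\u017c\u00f3\u0142\u0107";  // z-dot, o-acute, l-stroke, c-acute
        String czech = "\u0158eka";                    // R caron (U+0158)
        String spanish = "ma\u00f1ana";                // n tilde (U+00F1)
        String mixed = czech + " " + spanish;          // both in one field

        String[] samples = {german, polish, czech, spanish, mixed};
        String[] charsets = {"windows-1252", "windows-1250", "UTF-8"};

        for (String sample : samples) {
            StringBuilder line = new StringBuilder(sample).append(" ->");
            for (String cs : charsets) {
                line.append(' ').append(cs).append('=').append(fits(sample, cs));
            }
            System.out.println(line);
        }
    }
}
```

With a standard JDK, the German sample fits all three charsets. The Polish and Czech samples fit only windows-1250 and UTF-8, and the Spanish sample fits only windows-1252 and UTF-8. The mixed field fits only UTF-8.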

You create a FontSelector and add fonts in all the required encodings. Then you pass your text to this FontSelector, and it returns a text phrase decorated with the required encoding specifications. This phrase is then added to the document, and the output is OK. I tried this with a simple Java program calling iText directly. I have no idea yet how I could do this in JasperReports or even iReport.

There is an alternative way to tackle the problem: buy or otherwise get a font with Unicode encoding. With such a font we should be able to print all European characters in a single string using the Identity-H encoding.


But you have to embed your font if you want others to read your document (if your font license allows embedding). I will try this in the next few days and post my results here, if you are interested.

Regards,
Christoph

OK, I tried TrueType fonts with the PDF exporter, and it worked. Using iReport 3.1.3-nb: include the path to your font directory (for me it was C:\Windows\Fonts) in the classpath (menu bar: Tools > Options > Classpath tab). After that, the path also shows up in the Fontpath tab of the same Options window, and it must be checked there so that iReport searches it for fonts.

Now all TTF fonts show up in the drop-down list of the PDF font property in the property sheet. I selected arial.ttf and chose Identity-H as the PDF encoding. After that, the PDF displays characters such as the Czech 'R caron' (U+0158) and the Spanish 'n tilde' (U+00F1) in a single field.

The exporter automatically embedded a subset of the Arial TrueType font in the PDF document. This solves the problem if you have suitable TTF fonts available and accept that these fonts are embedded in your documents. Personally, I would prefer the FontSelector method mentioned above, but I still have no idea how to do this with iReport/JasperReports.

Hi,

JasperReports now has support for what we call 'font extensions'. A font extension is a way to package TTF files and make sure they are available both to the JVM, for all the font metrics required, and to the reporting engine at PDF export time. Check the /demo/fonts folder of the JR project to see a font extension that adds the font families used by almost all JR samples.


When you use properly configured font extensions, you only need to set the fontName attribute. No other font attributes are required, because they are already configured in the font extension file. I hope this helps.